Project 2

Background

Due date: Sept 30 at 11:59pm

The goal of this assignment is to practice designing and writing functions, along with the tidyverse skills that we learned in our previous project. Writing functions involves thinking about how code should be divided up and what the interface/arguments should be. In addition, you need to think about what the function will return as output.

To submit your project

Please write up your project using R Markdown and process it with knitr. Compile your document as an HTML file and submit your HTML file to the dropbox on Courseplus. Please show all your code (i.e. make sure to set echo = TRUE) for your answers to each part.

Install packages

Before attempting this assignment, you should first install the following packages, if they are not already installed:

install.packages("tidyverse")

install.packages("tidytuesdayR")

Part 1: Fun with functions

In this part, we are going to practice creating functions.

Part 1A: Exponential transformation

The exponential of a number can be written as an infinite series expansion of the form

$$
\exp(x) = 1 + x + \frac{x^2}{2!} + \frac{x^3}{3!} + \cdots
$$

Of course, we cannot compute an infinite series by the end of this term and so we must truncate it at a certain point in the series. The truncated sum of terms represents an approximation to the true exponential, but the approximation may be usable.

Write a function that computes the exponential of a number using the truncated series expansion. The function should take two arguments:

- x: the number to be exponentiated
- k: the number of terms to be used in the series expansion beyond the constant 1. The value of k is always \(\geq 1\).

For example, if \(k = 1\), then the Exp function should return the number \(1 + x\). If \(k = 2\), then you should return the number \(1 + x + x^2/2!\).
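As a hand-worked check (the values \(x = 1\) and \(k = 3\) are chosen here only for illustration), the truncated series gives

$$
1 + 1 + \frac{1^2}{2!} + \frac{1^3}{3!} = 1 + 1 + 0.5 + 0.1667 \approx 2.6667
$$

which is already close to the true value \(e \approx 2.7183\). Adding more terms (larger \(k\)) should bring the approximation closer.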

Include at least one example of output using your function.

- You can assume that the input value x will always be a single number.
- You can assume that the value k will always be an integer \(\geq 1\).
- Do not use the exp() function in R.
- The factorial() function can be used to compute factorials.

Exp <- function(x, k) {
  # Add your solution here
}

Part 1B: Sample mean and sample standard deviation

Next, write two functions called sample_mean() and sample_sd() that each take as input a vector of data of length \(N\) and calculate, respectively, the sample average and the sample standard deviation for the set of \(N\) observations.

$$
\bar{x} = \frac{1}{N} \sum_{i=1}^N x_i
$$

$$
s = \sqrt{\frac{1}{N-1} \sum_{i=1}^N (x_i - \bar{x})^2}
$$

Include at least one example of output using your functions.

- You can assume that the input value x will always be a vector of numbers of length N.
- Do not use the mean() and sd() functions in R.
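To have something to check your functions against, here is a small hand-worked case. The data \(x = (2, 4, 4, 6)\) are made up for illustration, and the calculation assumes the usual \(N-1\) denominator for the sample standard deviation.

$$
\bar{x} = \frac{2 + 4 + 4 + 6}{4} = 4, \qquad
s = \sqrt{\frac{(-2)^2 + 0^2 + 0^2 + 2^2}{4 - 1}} = \sqrt{\frac{8}{3}} \approx 1.633
$$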

sample_mean <- function(x) {
  # Add your solution here
}

sample_sd <- function(x) {
  # Add your solution here
}

Part 1C: Confidence intervals

Next, write a function called calculate_CI() that:

- Takes two inputs. First, it should take as input a vector of data of length \(N\). Second, it should also have a conf (\(= 1 - \alpha\)) argument that allows the confidence interval to be adapted for different \(\alpha\).
- Calculates a confidence interval (CI) (e.g. a 95% CI) for the estimate of the mean in the population. If you are not familiar with confidence intervals, a CI is an interval that contains the population parameter with probability \(1-\alpha\), taking on this form

$$
\bar{x} \pm t_{\alpha/2, N-1} s_{\bar{x}}
$$

where \(t_{\alpha/2, N-1}\) is the value needed to generate an area of \(\alpha / 2\) in each tail of the \(t\)-distribution with \(N-1\) degrees of freedom and \(s_{\bar{x}} = \frac{s}{\sqrt{N}}\) is the standard error of the mean. For example, if we pick a 95% confidence interval and \(N = 50\), then you can calculate \(t_{\alpha/2, N-1}\) as

alpha <- 1 - 0.95
degrees_freedom <- 50 - 1
t_score <- qt(p = alpha / 2, df = degrees_freedom, lower.tail = FALSE)

- Returns a named vector of length 2, where the first value is the lower_bound and the second value is the upper_bound.

calculate_CI <- function(x, conf = 0.95) {
  # Add your solution here
}

Include an example of output from your function when using two different levels of conf.

If you want to check if your function output matches an existing function in R, consider a vector \(x\) of length \(N\) and see if the following two code chunks match.

calculate_CI(x, conf = 0.95)

dat <- data.frame(x = x)
fit <- lm(x ~ 1, dat)
# Calculate a 95% confidence interval
confint(fit, level = 0.95)

Part 2: Wrangling data

In this part, we will practice our wrangling skills with the tidyverse that we learned about in module 1.

Data

The two datasets for this part of the assignment come from TidyTuesday. Specifically, we will use the following data from January 2020, which I have provided for you below:

tuesdata <- tidytuesdayR::tt_load('2020-01-07')
rainfall <- tuesdata$rainfall
temperature <- tuesdata$temperature

However, to avoid re-downloading data, we will check to see if those files already exist using an if() statement:

library(here)

if (!file.exists(here("data", "tuesdata_rainfall.RDS"))) {
  tuesdata <- tidytuesdayR::tt_load('2020-01-07')
  rainfall <- tuesdata$rainfall
  temperature <- tuesdata$temperature

  # create the data directory if needed; saveRDS() fails if it does not exist
  if (!dir.exists(here("data"))) dir.create(here("data"))

  # save the files to RDS objects
  saveRDS(tuesdata$rainfall, file = here("data", "tuesdata_rainfall.RDS"))
  saveRDS(tuesdata$temperature, file = here("data", "tuesdata_temperature.RDS"))
}

The body of the if() statement above will only run if the file tuesdata_rainfall.RDS cannot be found in the data folder of your project. After that, we can just read in the saved files every time we knit the R Markdown document, instead of re-downloading them every time.

Let’s load the datasets

rainfall <- readRDS(here("data", "tuesdata_rainfall.RDS"))
temperature <- readRDS(here("data", "tuesdata_temperature.RDS"))

Now we can look at the data with glimpse()

library(tidyverse)

glimpse(rainfall)

Rows: 179,273

Columns: 11

$ station_code <chr> "009151", "009151", "009151", "009151", "009151", "009151…

$ city_name <chr> "Perth", "Perth", "Perth", "Perth", "Perth", "Perth", "Pe…

$ year <dbl> 1967, 1967, 1967, 1967, 1967, 1967, 1967, 1967, 1967, 196…

$ month <chr> "01", "01", "01", "01", "01", "01", "01", "01", "01", "01…

$ day <chr> "01", "02", "03", "04", "05", "06", "07", "08", "09", "10…

$ rainfall <dbl> NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

$ period <dbl> NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

$ quality <chr> NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

$ lat <dbl> -31.96, -31.96, -31.96, -31.96, -31.96, -31.96, -31.96, -…

$ long <dbl> 115.79, 115.79, 115.79, 115.79, 115.79, 115.79, 115.79, 1…

$ station_name <chr> "Subiaco Wastewater Treatment Plant", "Subiaco Wastewater…

glimpse(temperature)

Rows: 528,278

Columns: 5

$ city_name <chr> "PERTH", "PERTH", "PERTH", "PERTH", "PERTH", "PERTH", "PER…

$ date <date> 1910-01-01, 1910-01-02, 1910-01-03, 1910-01-04, 1910-01-0…

$ temperature <dbl> 26.7, 27.0, 27.5, 24.0, 24.8, 24.4, 25.3, 28.0, 32.6, 35.9…

$ temp_type <chr> "max", "max", "max", "max", "max", "max", "max", "max", "m…

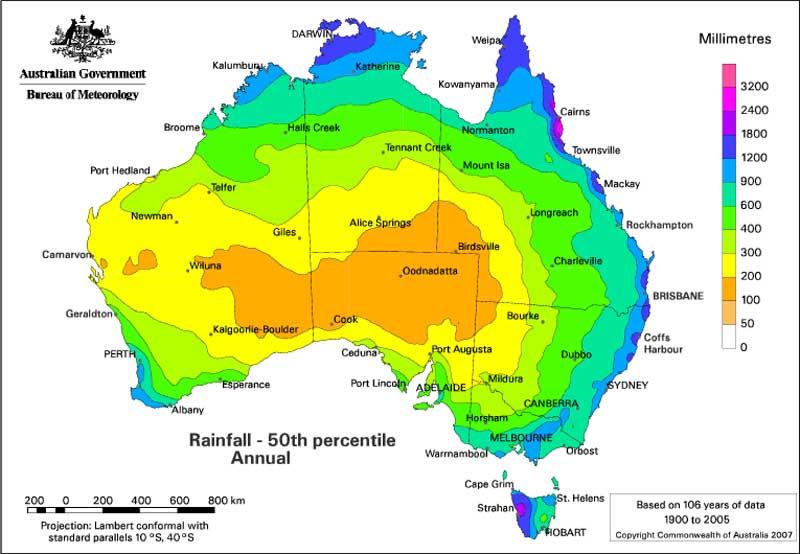

$ site_name <chr> "PERTH AIRPORT", "PERTH AIRPORT", "PERTH AIRPORT", "PERTH …If we look at the TidyTuesday github repo from 2020, we see this dataset contains temperature and rainfall data from Australia.

[Source: Geoscience Australia]

Here is a data dictionary for what all the column names mean:

Tasks

Using the rainfall and temperature data, perform the following steps and create a new data frame called df:

- Start with the rainfall dataset and drop any rows with NAs.
- Create a new column titled date that combines the columns year, month, day into one column separated by “-” (e.g. “2020-01-01”). This column should not be a character, but should be recognized as a date. (Hint: check out the ymd() function in the lubridate R package.) You will also want to add a column that just keeps the year.
- Using the city_name column, convert the city names (character strings) to all upper case.
- Join this wrangled rainfall dataset with the temperature dataset such that it includes only observations that are in both data frames. (Hint: there are two keys that you will need to join the two datasets together.) (Hint: If all has gone well thus far, you should have a dataset with 83,964 rows and 13 columns.)
- You may need to use functions outside these packages to obtain this result. In particular, you may find the functions drop_na() from tidyr and str_to_upper() from stringr useful.
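The functions named in the steps above can be seen working together on a tiny made-up example. This is only a sketch: the data frames toy_rain and toy_temp and their column names are hypothetical and are not the assignment data, so the code will need adapting rather than copying.

```r
library(dplyr)
library(tidyr)
library(stringr)
library(lubridate)

# Hypothetical toy data, not the assignment data
toy_rain <- tibble(
  city    = c("Perth", "Perth", "Sydney"),
  year    = c(2020, 2020, 2020),
  month   = c("01", "01", "02"),
  day     = c("01", "02", "15"),
  rain_mm = c(1.2, NA, 3.4)
)

toy_temp <- tibble(
  city   = c("PERTH", "SYDNEY"),
  date   = as.Date(c("2020-01-01", "2020-02-15")),
  temp_c = c(30.1, 25.4)
)

toy_joined <- toy_rain %>%
  drop_na() %>%                                        # remove rows containing NA
  mutate(
    date = ymd(paste(year, month, day, sep = "-")),    # character pieces -> Date
    city = str_to_upper(city)                          # match the case used in toy_temp
  ) %>%
  inner_join(toy_temp, by = c("city", "date"))         # keep rows present in both, two keys

toy_joined
```

Note that the join only finds matches after the city names have been converted to upper case and the date column has the same type in both data frames.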

# Add your solution here

Part 3: Data visualization

In this part, we will practice our ggplot2 plotting skills within the tidyverse starting with our wrangled df data from Part 2. For full credit in this part (and for all plots that you make), your plots should include:

- An overall title for the plot and a subtitle summarizing key trends that you found. Also include a caption in the figure.

- There should be an informative x-axis and y-axis label.

Consider playing around with the theme() function to make the figure shine, including playing with background colors, font, etc.

Part 3A: Plotting temperature data over time

Use the functions in the ggplot2 package to make a line plot of the max and min temperature (y-axis) over time (x-axis) for each city in our wrangled data from Part 2. You should only consider years 2014 and onwards. For full credit, your plot should include:

- For a given city, the min and max temperature should both appear on the plot, but they should be two different colors.

- Use a facet function to facet by city_name to show all cities in one figure.

# Add your solution here

Part 3B: Plotting rainfall over time

Here we want to explore the distribution of rainfall (log scale) with histograms for a given city (indicated by the city_name column) for a given year (indicated by the year column) so we can make some exploratory plots of the data.

You are again using the wrangled data from Part 2.

The following code plots the data from one city (city_name == "PERTH") in a given year (year == 2000).

df %>%
  filter(city_name == "PERTH", year == 2000) %>%
  ggplot(aes(log(rainfall))) +
  geom_histogram()

While this code is useful, it only provides us information on one city in one year. We could cut and paste this code to look at other cities/years, but that can be error prone and just plain messy.

The aim here is to design and implement a function that can be re-used to visualize all of the data in this dataset.

- There are 2 aspects that may vary in the dataset: the city_name and the year. Note that not all combinations of city_name and year have measurements.
- Your function should take as input two arguments, city_name and year.
- Given the input from the user, your function should return a single histogram for that input. Furthermore, the data should be readable on that plot so that it is in fact useful. It should be possible to visualize the entire dataset with your function (through repeated calls to your function).
- If the user enters an input that does not exist in the dataset, your function should catch that and report an error (via the stop() function).
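As a reminder of how stop() behaves, here is a minimal base R sketch of input checking. The helper check_choice() and its arguments are hypothetical names made up for this illustration. It is one possible pattern, not the required design for your function.

```r
# Hypothetical helper: raise an error if `value` is not one of `allowed`
check_choice <- function(value, allowed, what = "value") {
  if (!(value %in% allowed)) {
    stop(what, " '", value, "' not found in the data", call. = FALSE)
  }
  invisible(TRUE)
}

# A valid input passes silently
check_choice("A", allowed = c("A", "B"), what = "group")

# An invalid input raises an error; tryCatch() is used here only so that
# the example keeps running and we can look at the message
msg <- tryCatch(
  check_choice("Z", allowed = c("A", "B"), what = "group"),
  error = function(e) conditionMessage(e)
)
print(msg)
```

An informative message that names the offending input makes it much easier for the user to see what went wrong.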

For this section,

- Write a short description of how you chose to design your function and why.
- Present the code for your function in the R Markdown document.
- Include at least one example of output from your function.

# Add your solution here

Part 4: Apply functions and plot

Part 4A: Tasks

In this part, we will apply the functions we wrote in Part 1 to our rainfall data starting with our wrangled df data from Part 2.

- First, filter for only years 2014 and onwards.
- For a given city and for a given year, calculate the sample mean (using your function sample_mean()), the sample standard deviation (using your function sample_sd()), and a 95% confidence interval for the average rainfall (using your function calculate_CI()). Specifically, you should add two columns in this summarized dataset: a column titled lower_bound and a column titled upper_bound containing the lower and upper bounds for your CI (calculated using your function calculate_CI()).
- Call this summarized dataset rain_df.
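One detail in the steps above is getting a function that returns a named vector of length 2 into two separate summary columns. The sketch below shows one way this might be done on made-up data. The helper toy_range() and the data frame toy are hypothetical stand-ins and are not part of the assignment.

```r
library(dplyr)

# Hypothetical helper that returns a named vector of length 2
toy_range <- function(x) {
  c(low = min(x), high = max(x))
}

# Hypothetical toy data, not the assignment data
toy <- tibble(
  g = c("a", "a", "a", "b", "b"),
  v = c(1, 5, 3, 10, 20)
)

toy_summary <- toy %>%
  group_by(g) %>%
  summarise(
    low  = toy_range(v)[["low"]],   # pick one element of the named vector by name
    high = toy_range(v)[["high"]]
  )

toy_summary
```

Indexing with [[ ]] and a name returns a single unnamed number, which is what summarise() expects for a one-row-per-group summary column.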

# Add your solution here

Part 4B: Tasks

Using rain_df, plot the estimates of mean rainfall and the 95% confidence intervals on the same plot. There should be a separate faceted plot for each city. Think about using ggplot() with both geom_point() (and geom_line() to connect the points) for the means and geom_errorbar() for the lower and upper bounds of the confidence interval.
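If you have not used geom_errorbar() before, the following sketch shows how the geoms mentioned above fit together. The data frame toy_ci and all of its columns and values are made up for illustration, so treat this as a starting point rather than the expected answer.

```r
library(ggplot2)

# Hypothetical toy estimates with made-up interval bounds
estimate <- c(2.0, 2.5, 2.2, 3.1, 2.9, 3.4)
toy_ci <- data.frame(
  group    = rep(c("A", "B"), each = 3),
  year     = rep(2014:2016, times = 2),
  estimate = estimate,
  lower    = estimate - 0.3,
  upper    = estimate + 0.3
)

p <- ggplot(toy_ci, aes(x = year, y = estimate)) +
  geom_line() +                                                  # connect the estimates
  geom_point() +                                                 # one point per estimate
  geom_errorbar(aes(ymin = lower, ymax = upper), width = 0.2) +  # interval bounds
  facet_wrap(~group) +                                           # one panel per group
  labs(
    title = "Toy estimates with interval bounds",
    x = "Year",
    y = "Estimate"
  )

p
```

geom_errorbar() needs the ymin and ymax aesthetics, which is why the lower and upper bounds are stored as their own columns.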

# Add your solution here